13 Times Virtual Assistants Were Less Than Helpful

Updated April 25, 2019, 7:09 p.m. ET

Virtual assistants like Alexa, Siri, and Google Assistant make our lives easier by helping us make grocery lists, create reminders, and even set the mood just by shouting at a tiny device. But nobody's perfect, not even artificial intelligence. Whether they can't understand what we've said or are simply stumped, sometimes our virtual assistants can offer up some confusing and hilarious results. Here are 13 times virtual assistants were absolutely no help at all.

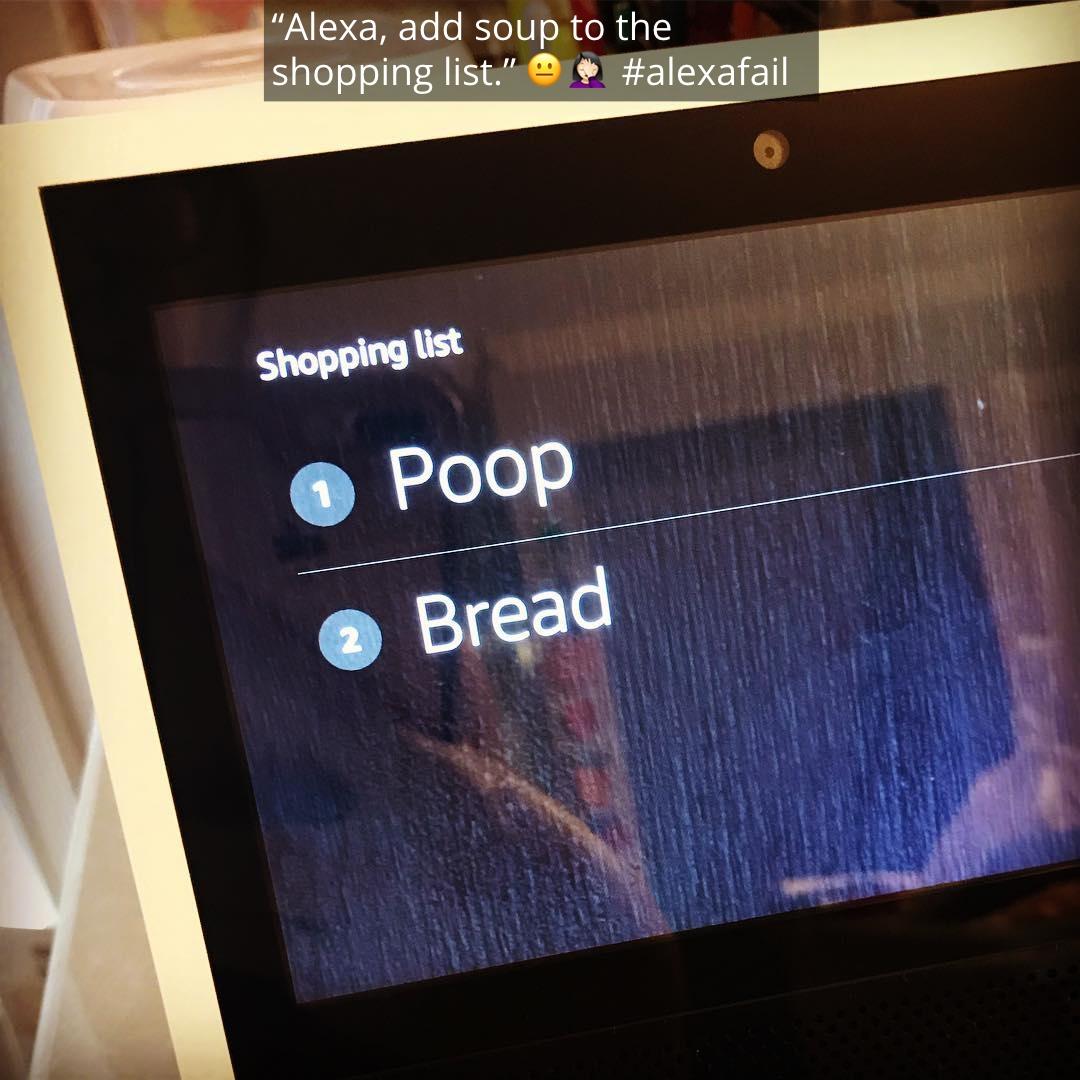

Remind me not to eat at their house.

Alexa, quick question: I know Amazon sells pretty much everything, but who the hell is selling poop? And who would want to buy it?

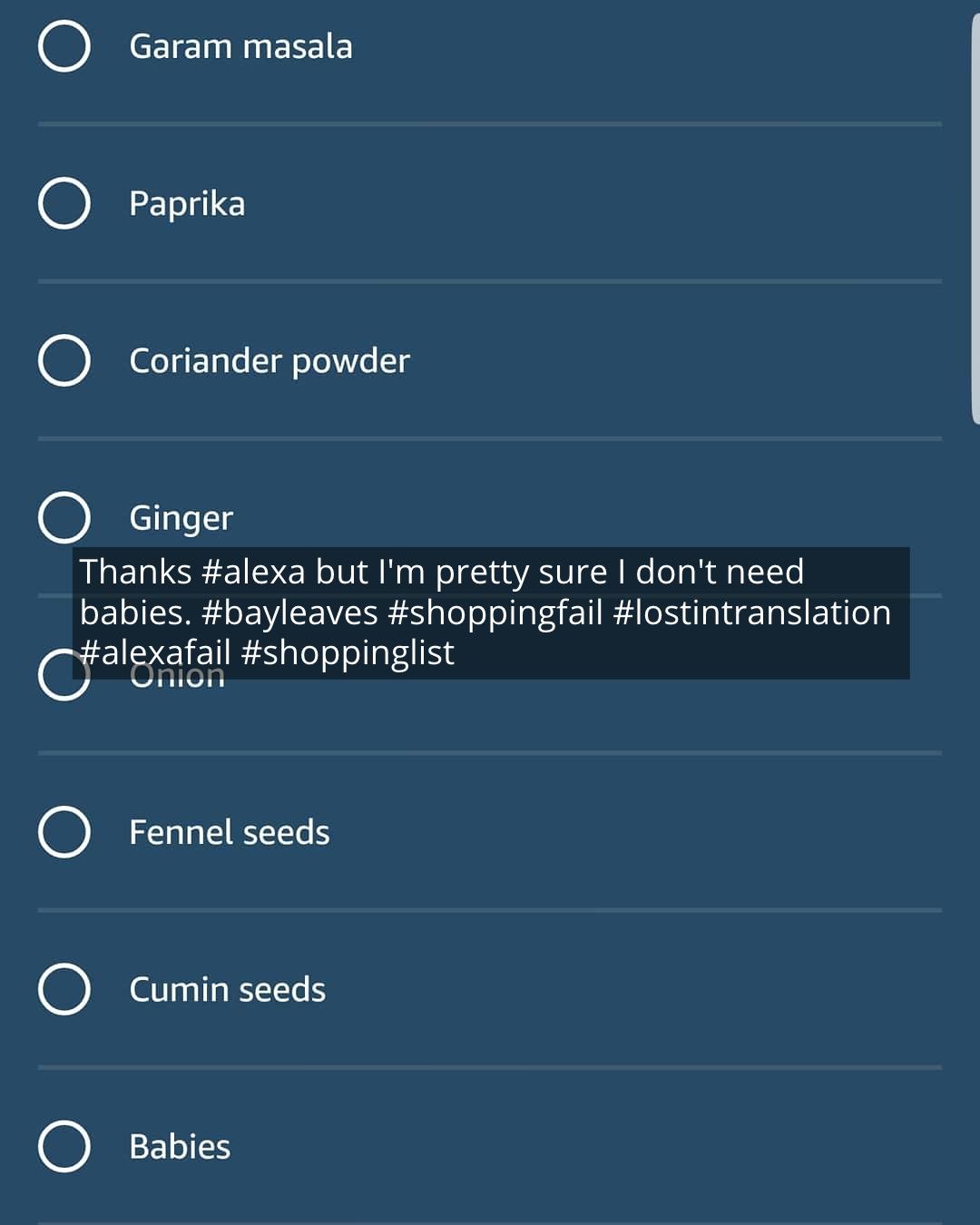

Hint hint.

I've heard of a biological clock ticking, but this is a whole new ballpark. Or, you know, Alexa just misheard "bay leaves" as "babies."

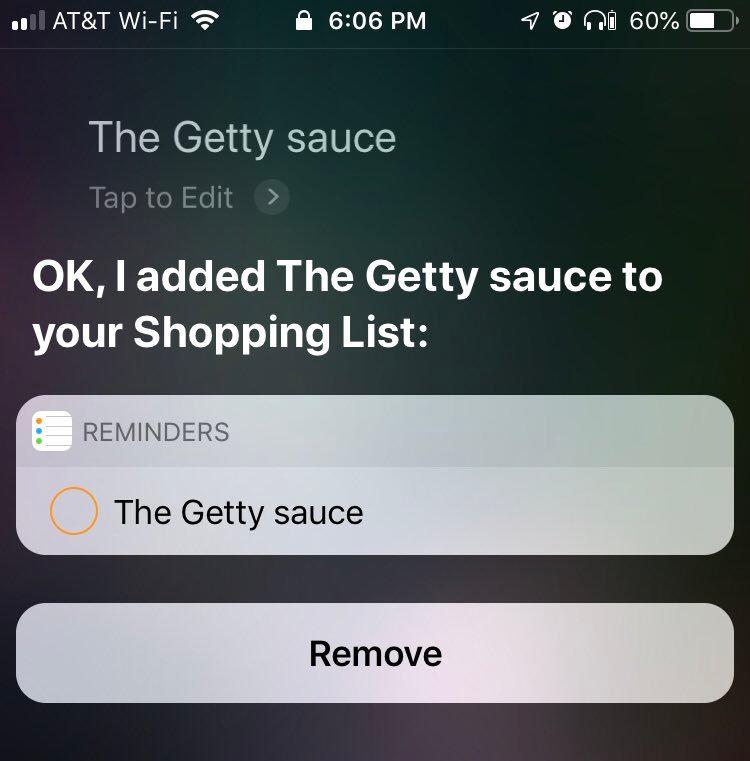

Close enough.

Sometimes it's clear what you were *trying* to remind yourself to do... even if what Siri hears is total nonsense.

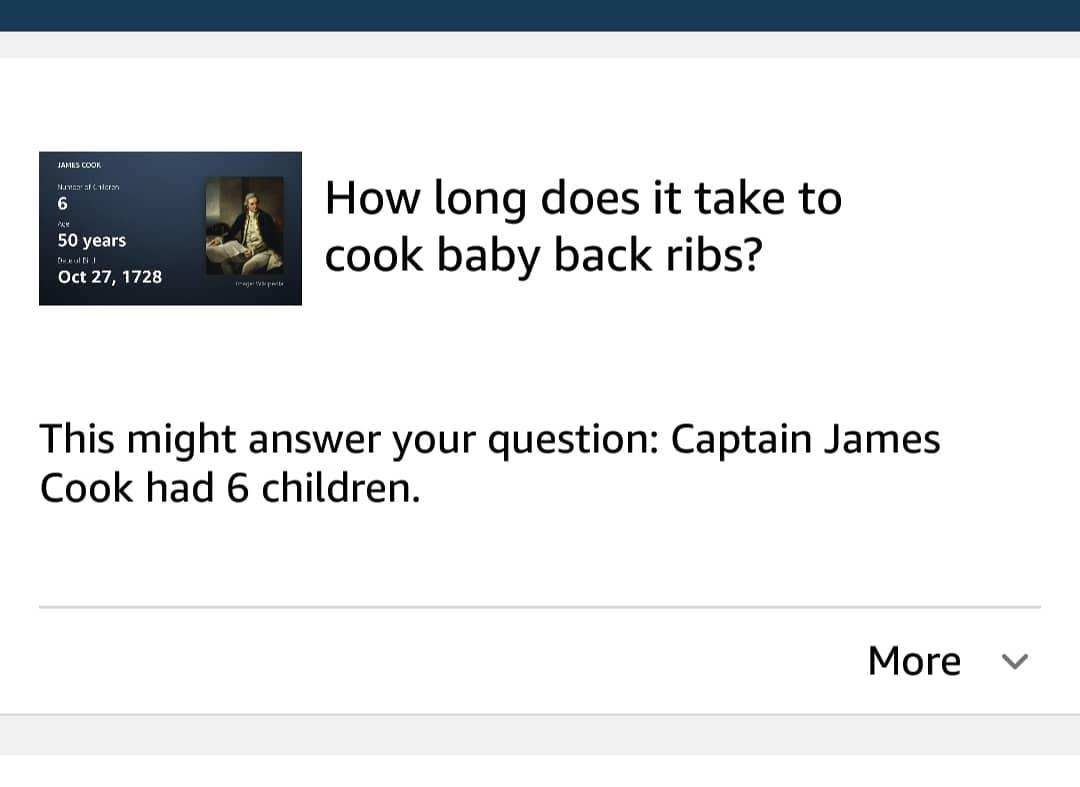

Interesting, yes...

...but helpful? Not so much. This user asked how long it takes to cook baby back ribs and instead got some interesting trivia about 18th-century British explorer James Cook.

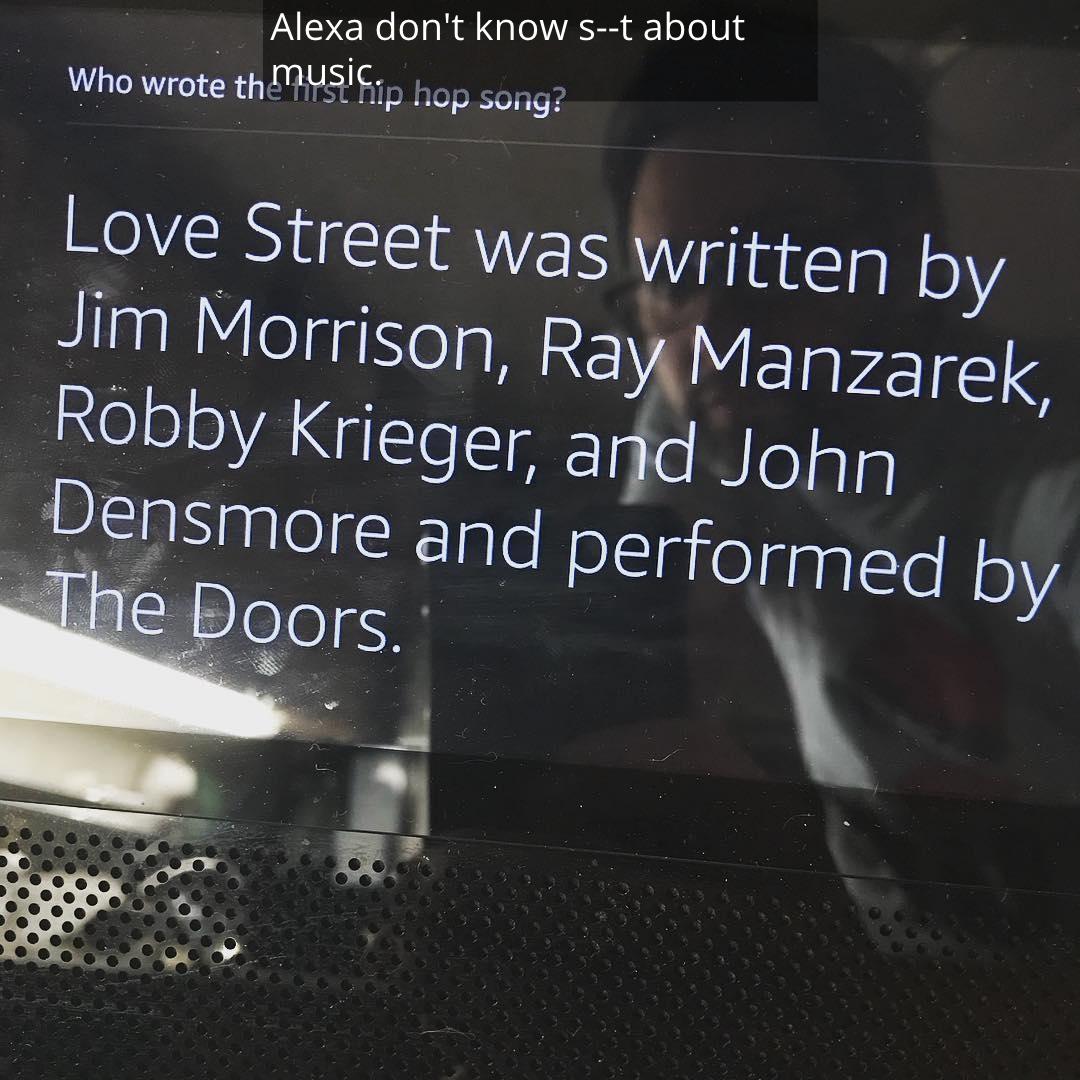

Alexa needs to take a music history class.

When this Alexa user asked about the first hip hop song, she spat out some facts about a ditty by The Doors. Granted, the song technically has a spoken word section in the middle, but there's not a single music historian who would classify that as hip hop.

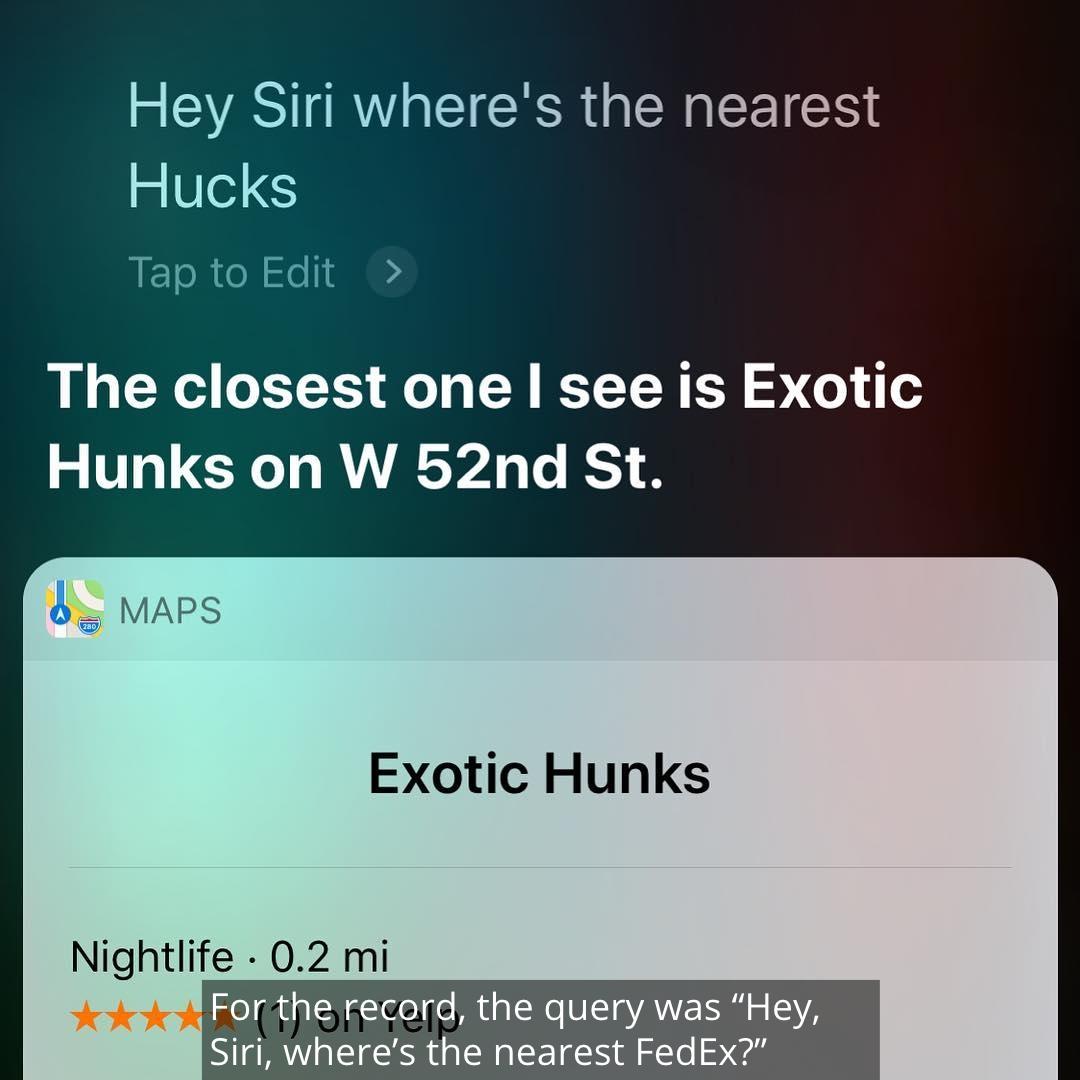

Double fail.

Not only did Siri completely misunderstand what this gentleman was saying — he was asking where the closest FedEx is — but it took "Hucks" and turned it into "hunks." That's a completely different kind of package delivery service, Siri.

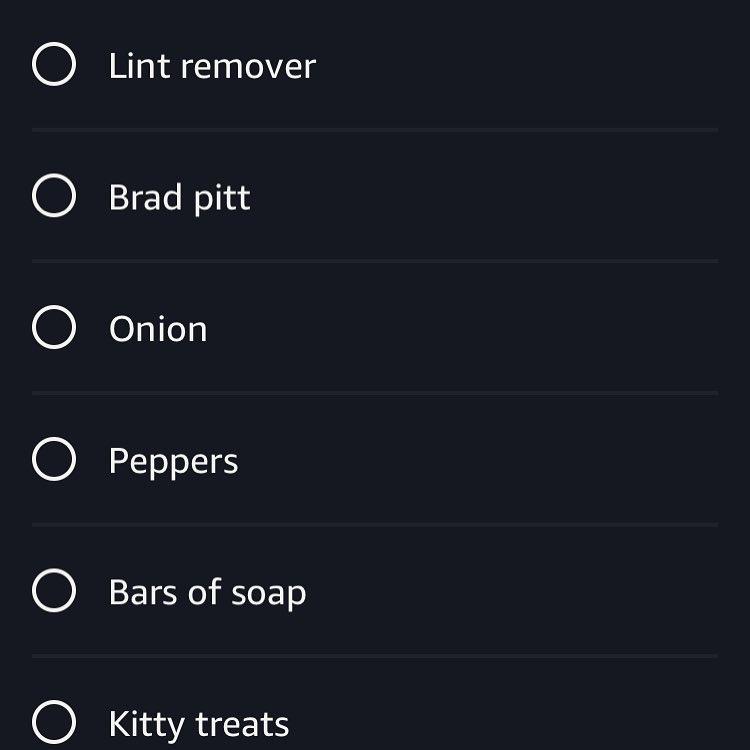

I like the way you're thinking, Alexa.

Apparently this shopper was trying to request "ketchup" be added to the grocery list. IDK, I wouldn't exactly be mad if I got Brad Pitt instead.

Good luck with that.

This sleepy dad tried to set a reminder to "feed the baby," and instead got a suggestion that 2 a.m. is the time to vanquish your infant. Also, don't babies come equipped with a built-in "reminder" function already without bringing Alexa into it?

Sassy and not at all helpful.

This user was actually asking where they could find "beer" near here. Clearly, Siri heard otherwise, but instead of actually answering where one might find an appropriate place to "get weird," the sassy assistant insisted she's "just trying to help you." How exactly, Siri? Where can we get weird?!

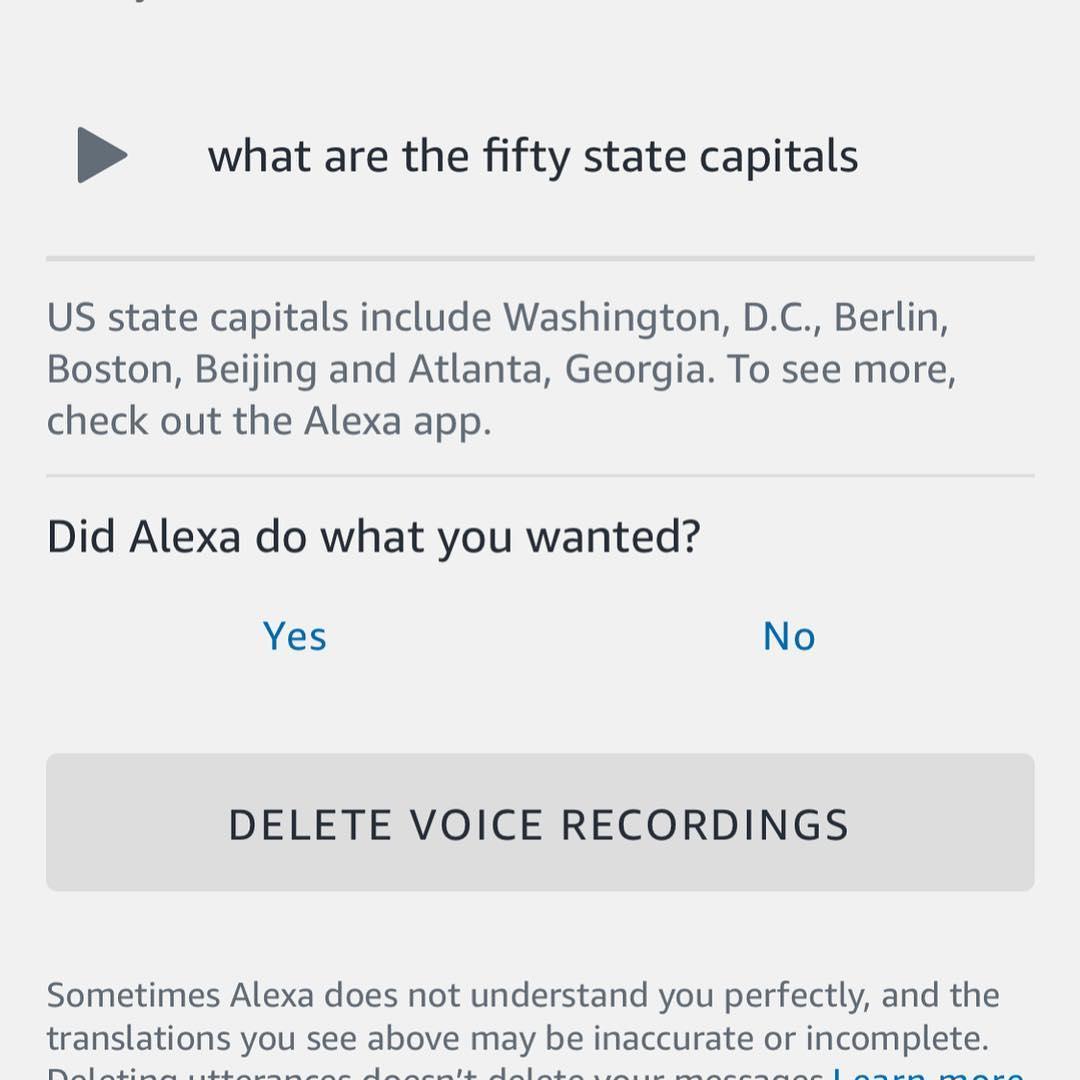

Don't crib off Alexa...

We've seen stories of kids using Alexa to cheat on their homework. Well, based on this answer to a question about U.S. geography, I wouldn't recommend it. When asked for the state capitals, it gave Berlin and Beijing among the answers. And while Washington, D.C. is the capital of the country, it doesn't belong to any state. Two out of five correct answers definitely wouldn't earn you a passing grade.

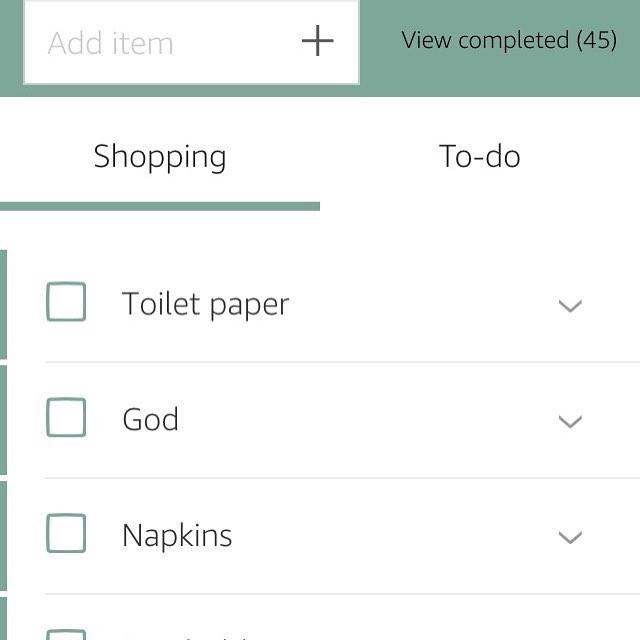

Wow, Amazon really has everything...

If you ever questioned whether there is a higher power, Alexa seems to be confirming not only the existence of God but that you can have her sent to your house with free two-day shipping.

Well, that's a relief.

On the one hand, it's a bummer that Alexa misunderstood this user's command. On the other, I'm super glad she didn't start playing Kate Smith songs.

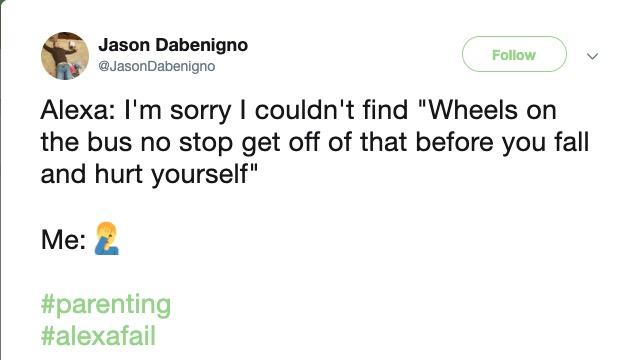

An Alexa fail every parent can relate to.

Has there ever been a more accurate portrayal of what it means to be the parent of a toddler? Every waking moment is spent either keeping them entertained or keeping them from certain death.